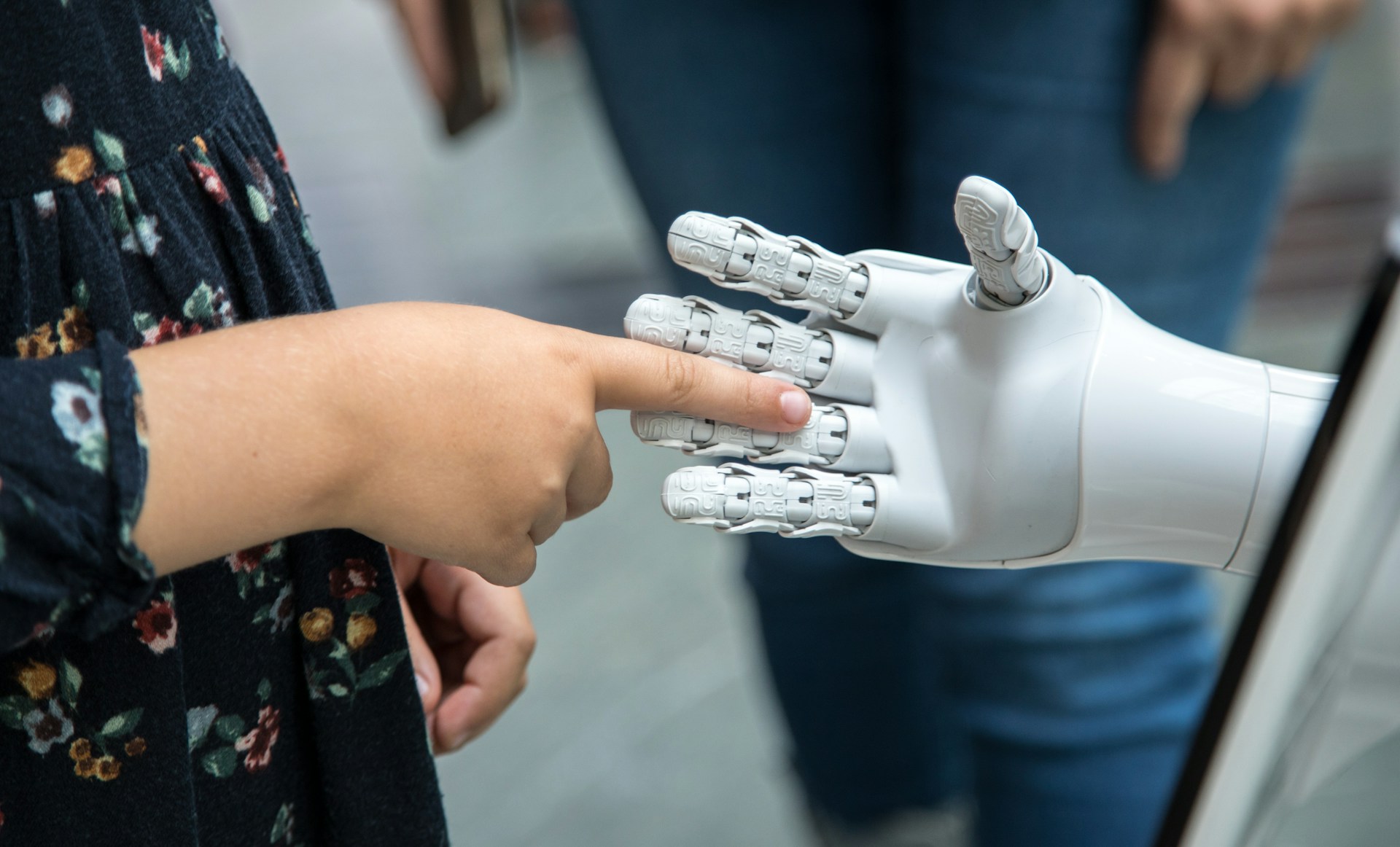

Anthropomorphising AI is rhetorically seductive but intellectually unsound. We must remember that the analogy between artificial intelligence and human intelligence is a distant one. Otherwise, we risk conflating computer systems with human-like agents and automation with autonomy. The anthropomorphisation of artificial intelligence – i.e. the attribution of human characteristics, intentions, or moral status to computational systems – has become a pervasive feature of public discourse, marketing (particularly in the private sector), and even some strands of academia. Popular narratives describe AI systems as entities that ‘think’, ‘understand’ or ‘want’, and conversational interfaces are explicitly designed to reinforce such impressions. The problem is that anthropomorphising AI is both conceptually mistaken and practically harmful. It obscures the technical limitations of AI systems, misleads users about capabilities and risks, and ultimately cloaks moral reasoning behind syntax with no regard for semantics. More importantly, it confuses our understanding of what it is to be human: to be able to build authentic loving relationships as beings who are not only physical and mental (particularly with regard to our assumed rationality), but also emotional and spiritual.

Misrepresentation of Technical Capabilities

Anthropomorphising AI leads users to systematically overestimate what such systems can do. Statements such as ‘the model knows’, ‘the system decided’, or ‘ChatGPT says…’ suggest agency and human-like comprehension where neither exists. In reality, most deployed AI systems optimise objective functions (which in turn are defined by human designers), using training data curated – often imperfectly – by institutions with specific incentives (e.g. Meta, Alphabet, Anthropic).

Researchers in machine learning have repeatedly emphasised this point – Professor Emily Bender from the University of Washington famously pointed out that large language models are ‘stochastic parrots’: systems that reproduce patterns in data without grounding in the world. While the phrase is polemical, it captures an important truth. The appearance of understanding is an emergent property of scale in datapoints and statistical regularity, not evidence of human-like cognition. Treating this appearance as reality risks unwarranted trust in AI outputs, particularly in high-stakes domains such as medicine, law, or public administration. This is not to diminish the significant potential of AI in such spheres but rather to acknowledge the inherent risks involved.

Contemporary AI systems, including large language models, are fundamentally artefacts: engineered systems that operate through statistical pattern recognition and optimisation. Mental states such as beliefs, intentions, or understanding belong to agents with human consciousness and intentionality, not to hardware-intensive, software-executing algorithms. John Searle’s well-known critique of ‘strong AI’ remains instructive here. Searle argued in his Chinese Room thought experiment that symbol manipulation alone does not constitute understanding: ‘Syntax is not sufficient for semantics’. When an AI system generates fluent language, it does not follow that it understands the content of that language. It cannot, because understanding in any normal sense requires human consciousness. Anthropomorphic descriptions blur this distinction, encouraging the false inference that linguistic competence entails cognitive or experiential depth.

The Issue of Moral Displacement and Confusion

There is a further consequence of anthropomorphisation: when AI systems are framed as quasi-persons, responsibility subtly shifts away from human actors. Failures can be deflected to ‘the AI’, rather than to the designers, deployers or institutions that selected training data, defined objectives and chose deployment contexts.

Anthropomorphising AI also affects how users relate to technology. Human beings are predisposed to attribute agency and emotion, particularly in interactive settings. Designers exploit this tendency through conversational cues, names and simulated empathy. While such design choices may improve user engagement, the impression they create remains an illusion, one that risks fostering emotional dependency or misplaced trust.

A recent article in The Economist raised some worrying concerns regarding the use of AI in early years education. Growing up with anthropomorphised AI companions that never express fatigue, frustration or any form of negative emotion is poor preparation for the human relationships children will form later in life. It is also emerging that users who perceive AI systems as social actors are more likely to disclose sensitive information and less likely to critically evaluate outputs, which leaves children particularly vulnerable. When a system appears to ‘care’ or ‘understand’, children may suspend scepticism. This is not merely a theoretical concern: it has wider practical implications for privacy, manipulation and informed consent.

Christian Perspectives on Humanity and Love

Many ethical systems hold that humanity is more than its biochemical make-up. Aristotle himself saw the cultivation of intellectual and moral virtues as realising a distinctly human form of flourishing (i.e. eudaimonia) that transcends basic biological functioning. A Christian perspective would focus not only on the development of the whole human person, in body, mind and spirit, but in particular on the mystery of love. Christianity points to the formation of committed, loving relationships as the supreme human ability gifted to us by God – indeed, human beings were created for a loving, covenantal, transformative relationship with the Creator and with each other.

The transformative power of this intimate love between God and human beings is expressed in its fullness in Christ. The Triune God – Father, Son and Holy Spirit – is defined as three persons consubstantially rooted in the perfect relationship of selfless love. When the Son embraces full humanity in the incarnation, it is this interpersonal selfless love that becomes available to all. It is in Him, as the true image of God (Latin: imago Dei), that true humanity is revealed, and it fundamentally includes the question of love. This is indeed the uniqueness of the Christian faith: in Christ, divine, eternal and selfless interpersonal love is revealed not only as an external reality, but as fully available and accessible to every human being. Humanity in Christian thought is both interrelational and fundamentally defined by love. AI systems do not have the capacity to partake in this. These ethical issues are crucial, and a misconceived engagement with AI will likely leave us face-to-face with many of the dangers discussed here.

Concluding Thoughts

A disciplined refusal to anthropomorphise AI is therefore not a matter of pedantry, but a prerequisite for technical accuracy, moral lucidity and responsible adoption. If AI is to be conscientiously integrated into our spheres of social and institutional life, it must be understood for what it is: a powerful class of tools created, constrained and deployed by humans that ought to be accountable to human values and institutions. Clear, non-anthropomorphic language would also support clearer policy. Describing AI systems as tools with specific affordances and limitations enables policymakers to focus on risk management, transparency and accountability, rather than non-existent computer semantics.

This is not a call for the adoption of a Luddite lifestyle, or indeed for all kinds of regulation to limit our use of AI, but rather an effort to maintain a proper perspective on, and understanding of, a technology that is already permeating our daily lives.

Andrei E. Rogobete is Associate Director at the Centre for Enterprise, Markets & Ethics.